Favor Defect Prevention Over Quality Inspection And Correction

In the manufacturing world, you would never find a company that assembles a bunch of parts into a final product before inspecting any of the individual parts, and they would not wait until the end of the assembly line to test for the quality of the product. The very notion of waiting until the product is “done”, to test it, would be appalling. How much time and money would be lost trying to figure out why something didn’t fit together properly, why it didn’t work, and why there was a quality issue with the final product? Rather, we see the manufacturing world taking an active role in preventing defects. Yes, they still do a final quality inspection, but the primary means of ensuring a quality product is delivered is not by waiting until the product is assembled to test it. They build quality in from the start and maintain that quality throughout the manufacturing process.

Prying Open The Case

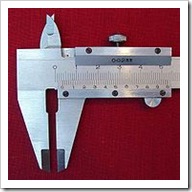

Imagine the inner workings of the phone to the left. This is a very complex piece of technology. Do you think Cisco would wait until they have assembled this phone and then try to pry open the case so that they can insert a set of electrodes to test and see that the circuit board is connected correctly? I certainly hope they don’t. Instead, when a manufacturing company is building something – anything – they start with the idea of preventing defects, not waiting until they are identified and correcting them. Many companies have an active Zero Defects policy where defect prevention is paramount and quality inspection is almost just a verification of what they already know – that the product is defect free.

When a part is stamped out, formed, molded, or otherwise created, it is done so to an exacting specification. After the part has been created, the part is then tested against the same specification to which it was originally built. If the part does not fall within the tolerance and guidance of the specification, it is scrapped and a new one is made. If a series of parts are found to be out of specification, it’s usually a sign that something in the process, tooling, or other portion of the manufacturing process is not right. When this happens, they fix the cause of the problem – whether the machines need to be calibrated, the people running the machines need better instructions or whatever the cause is. In the end, the specifications for the parts were used to create the part, identify whether or not the part was up to standards, and decide whether or not to keep that part.

What’s more, the manufacturing company doesn’t wait until after they start creating parts to create the specification. Rather, they take the time to properly engineer the specifications up front. They take measurements, create prototypes with varying specifications to see what works best, record the success and failure rates of the various specifications that are tried, and use other design and engineering principles to scientifically calculate the exacting specifications that will be used to produce the parts. This occurs at all levels of the product’s design and creation. For every resistor, capacitor and microchip that builds a circuit board, each one of them has their own specifications that have been carefully engineered. If any single capacitor does not meet the specifications, it is not sent to Cisco with the hopes that it works anyways. Only when all of the specifications of each part are met will they solder the parts to the circuit board, creating a subassembly.

What’s more, the manufacturing company doesn’t wait until after they start creating parts to create the specification. Rather, they take the time to properly engineer the specifications up front. They take measurements, create prototypes with varying specifications to see what works best, record the success and failure rates of the various specifications that are tried, and use other design and engineering principles to scientifically calculate the exacting specifications that will be used to produce the parts. This occurs at all levels of the product’s design and creation. For every resistor, capacitor and microchip that builds a circuit board, each one of them has their own specifications that have been carefully engineered. If any single capacitor does not meet the specifications, it is not sent to Cisco with the hopes that it works anyways. Only when all of the specifications of each part are met will they solder the parts to the circuit board, creating a subassembly.

When a subassembly is created, it also has a specification to which it was built. The subassembly then undergoes the same quality assurance process – verification that it meets the specifications and operational requirements. The process continues from here – each subassembly gets connected to a larger system which is built to a set of specifications, with rigorous testing of the larger system as it is built, ensuring it meets the specifications. When the final phone is assembled, we don’t have to worry about whether or not a specific capacitor is soldered to the correct location – we don’t have pry open the case on this phone and insert a set of electrodes to see if the electrical current is flowing correctly. Instead, we only need to plug this phone into the correct connections (an Cisco IP phone system in this case) and verify that the phone actually performs all of it’s functions, according the functional specifications of the phone. There simply is no need to verify the capacitor that was used in the very first step. We know it works because it was built in a system that actively prevents defects.

The manufacturing world is obsessed with testing. They are willing to test from the lowest possible levels of the system, out to the end-product and the behavior that is expected. They do this because the consumers of manufactured products demand perfect. Why, then, are so many software development companies so willing to only test from one end of the process? To only test once, and only from the user interface, just before the product is shipped?

A Specification By Any Other Name

Unfortunately, the software development industry as a whole, is years behind the manufacturing industry. Our definition of quality and success are often skewed and we may consider fifty or more known bugs in a system of moderate to large size to be acceptable. It doesn’t have to be this way, though. We have the technical capabilities of following in the footsteps of the manufacturing industry, and we should.

I’m sure there would be no small number of people that would say we already employ the use of specifications in software development. After all, that’s what the requirements gathering phase is for, right? So many software development companies have put so much effort, time and money into the process of producing a piece of paper that can be understood by humans, and labeled this a specification. The problem we face with paper, though, is how to effectively verify the software against what the paper says. How do we verify that series of software lines and I/O statements that are understood by a computer have actually implemented the human readable text on the paper?

We are fortunate, actually. We don’t have to accept a Word document or a piece of paper as the specification to build to. We have the ability to create executable specifications! We can, and should, be creating specifications that can measure and verify our code. Most people call it test driven development (TDD). Some call it Behavior Driven Development (BDD). I like to think of it as Specification Driven Development (SDD? Not sure if that really exists. And really, do we need another xDD acronym?). We write code that exercises our code in the form of unit tests, integration tests, functional tests, acceptance tests, or whatever you want to call them.

We’re Not Just Stamping Out Parts

One of the major problems that I have with the manufacturing/software development analogy is the obvious statement that we don’t stamp out the same parts over and over again. In spite of my previous comments on this analogy, I now think that we are more analogous to a specific part of manufacturing than I had previously understood. A more accurate representation of software development in the manufacturing world is new product design and development. The parallels work quite well from this perspective. I am not going to expound on this in great detail at this point. It should suffice to say, for the moment, that the process of product development described in Wikipedia is a fairly accurate representation of what we go through for the average software development project.

One of the major problems that I have with the manufacturing/software development analogy is the obvious statement that we don’t stamp out the same parts over and over again. In spite of my previous comments on this analogy, I now think that we are more analogous to a specific part of manufacturing than I had previously understood. A more accurate representation of software development in the manufacturing world is new product design and development. The parallels work quite well from this perspective. I am not going to expound on this in great detail at this point. It should suffice to say, for the moment, that the process of product development described in Wikipedia is a fairly accurate representation of what we go through for the average software development project.

When a manufacturing company is working on a new product, they once again don’t stamp out parts without knowing what they are doing. Many different parts may need to be tested, many different designs may need to be tried, but every one of these is still built to a specification. The major difference is that the specifications used are expected to change over time, until the final specification for the final pieces are accurate enough to produce a production-ready prototype. The same notions can be applied to the software development processes in TDD, with some additional benefits.

Built To Specs, Regression Tests And Change

Change happens. It’s a simple fact of software development. A customer thought they wanted X, but in reality they needed X-1/B – not quite what we originally thought. When this happens, we once again have a significant benefit created by our executable specifications. We only need to identify those specifications that are now wrong, correct them, and change the affected portions of the system to match the new specifications.

Our ability to change is direct evidence to one of the many benefits of TDD: regression tests. Every specification that we write becomes a regression test the moment we fulfill that specification’s requirements. With this in mind, we can work with an almost reckless abandon, free to add features, remove features, fix bugs (because let’s face it – we’re still going to find some issues somewhere in the system) and refactor the system to a higher standard, all without worry of breaking the system. We can act with this level of confidence because we have a safety net in our regression tests. If (when) we do break something in our new efforts, we will be notified the moment we re-execute our specifications – that is, run our regression tests. A specification test will fail and we will have a clear indication of what failed and why. This deep insight into the system gives us even further confidence in correcting any issues that we introduce. When a failed specification test tells you exactly which value from which class is wrong, and the context in which that class was executed is known (the exact input and expected output), pinpointing the problem becomes a rote process. Fixing the issue becomes relatively simple, and we begin to see true productivity improvements in our processes.

Start With Quality, End With Quality

Our industry is currently suffering from a lack of quality. We ship horrendously bad user experiences in products that are late and well over budget, yet we call this a ‘success’. It doesn’t have to be this way. If we change our perspective and start to take some cues from the manufacturing and product design and development world, we can dramatically increase our effectiveness as software developers. We can create high quality, low cost solutions like the world expects from manufacturers. Built to specification is certainly not a silver bullet. It is, however, the definition of quality in a Zero Defect environment.

By employing a built to specification mindset in our software development efforts, we can start with quality and maintain that quality throughout the life of our projects. This is the same process that is undertaken when a manufacturing company is working on new product design and development. It works well, it’s a proven process, and most of all – it makes sense. Build your software to specifications. Just make sure they are executable specifications.