Guidelines for Automated Testing: Defining Test Inputs

There are several simple rules to follow when dealing with test setup for automated tests:

- Data setup should be declarative

- Data setup should be as easy as you can possibly make it

- The inputs for your test belong with your test (they live inside of it)

Let’s walk through some examples of these rules being applied. As usual, I’m going to use StoryTeller for my examples.

Data setup should be declarative

We use tabular structures to construct our test inputs. It feels a little spreadsheet-like but it’s the most natural way to input a decent sample population.

Let’s consider an example of declaring inputs for “People” in a system. For any given person, you will likely need their first and last name, and let’s also optionally capture an email address:

| First | Last | Email |
| --- | --- | --- |
| Josh | Arnold | josh@arnold.com |
| Olivia | Arnold | olivia@arnold.com |
| Joel | Arnold | |
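To make "declarative" a bit more concrete, here is a minimal Python sketch of turning a table like this into records. It is not how StoryTeller does it; `parse_table` and the `Email` column name are my own assumptions for illustration.

```python
def parse_table(text):
    """Turn a pipe-delimited table into a list of dicts keyed by header."""
    lines = [line.strip() for line in text.strip().splitlines() if line.strip()]
    rows = [[cell.strip() for cell in line.strip("|").split("|")] for line in lines]
    header, *body = rows
    records = []
    for row in body:
        # Pad short rows so optional trailing columns can simply be left off.
        row = row + [""] * (len(header) - len(row))
        # Empty cells become None, meaning "not specified".
        records.append({name: (value or None) for name, value in zip(header, row)})
    return records


PEOPLE = """
| First  | Last   | Email             |
| Josh   | Arnold | josh@arnold.com   |
| Olivia | Arnold | olivia@arnold.com |
| Joel   | Arnold |
"""

people = parse_table(PEOPLE)
```

The test author only ever touches the table; the plumbing that converts it into objects is written once and reused.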

Another important note here: avoid unnecessary setup of data that is unrelated to the test at hand. For example, if lookup values are required for every single test then create a mechanism to automatically create them.

Data setup should be as easy as you can possibly make it

Oftentimes the models that you are constructing aren’t as simple as “first/last/email”. You may have entities with various required inputs, variants, etc. You absolutely do not want to go through the ceremony of declaring all of them in every single test.

We get around this in a couple of different ways:

- Use default values whenever we can (e.g., birthdate will always be 03/11/1979 unless otherwise specified)

- Use string conversion techniques to build up more complex objects

In my current project, we have a grid with 30+ fields to maintain. The input that we specify per test leverages default values for field values so that we don’t have to constantly repeat ourselves. This is particularly useful when creating sample populations large enough to test out paging mechanics.
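As a rough sketch of the default-values idea (in Python, with hypothetical names; only the birthdate default comes from the list above, and I am reading 03/11/1979 as March 11), a test row can be merged over a set of defaults so that each test states only what it cares about:

```python
from datetime import date

# Hypothetical defaults; only the birthdate value is taken from the text.
DEFAULTS = {
    "First Name": "Test",
    "Last Name": "Person",
    "Birthdate": date(1979, 3, 11),
}


def with_defaults(row, defaults=DEFAULTS):
    """Return a full record: defaults first, then whatever the test specified."""
    specified = {key: value for key, value in row.items() if value is not None}
    return {**defaults, **specified}


def sample_population(count, **overrides):
    """Build `count` records that differ only in a generated last name."""
    return [
        with_defaults({"Last Name": f"Person{i}", **overrides})
        for i in range(1, count + 1)
    ]


josh = with_defaults({"First Name": "Josh", "Last Name": "Arnold"})
three_pages = sample_population(45)  # e.g. enough rows for three pages of 15
```

With 30+ fields, the same merge keeps a paging test down to a single line of setup.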

On top of the various fields we must maintain, we have errors that we track per row. Each row can have zero or more errors. Naturally, our test input must be able to support the entry of such errors. Now, rather than creating a separate tabular structure for this, we decided to allow for a particular string syntax that looks something like this:

{field name}: ErrorCode1[, ErrorCode2] {field name}: …

This allows us to input data like:

| First Name | Last Name | Errors |
| --- | --- | --- |
| Josh | Arnold | FirstName: E100 |
| Olivia | Arnold | NONE |
| Joel | Arnold | NONE |
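To show what the string conversion might look like, here is a minimal Python sketch of a parser for that syntax. It is not the StoryTeller implementation; `parse_errors` is a hypothetical name, and I am assuming field names contain no spaces (as in `FirstName`) and that `NONE` means "no errors".

```python
import re


def parse_errors(text):
    """Parse 'Field: Code1, Code2 Other: Code3' into {field: [codes]}."""
    text = text.strip()
    if not text or text.upper() == "NONE":
        return {}
    # Splitting on "name:" with a capturing group keeps the names in the result:
    # ['', 'FirstName', ' E100, E101 ', 'LastName', ' E200']
    parts = re.split(r"(\w+)\s*:", text)
    names = parts[1::2]
    code_lists = parts[2::2]
    return {
        name: [code.strip() for code in codes.split(",") if code.strip()]
        for name, codes in zip(names, code_lists)
    }


single = parse_errors("FirstName: E100")
several = parse_errors("FirstName: E100, E101 LastName: E200")
none = parse_errors("NONE")
```

The payoff is that errors stay in the same row as the data they belong to instead of living in a second table that has to be kept in sync.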

We can accomplish this in StoryTeller with something like this:

The inputs for your test belong with your test (they live inside of it)

This is not an attack on the ObjectMother pattern by any means. However, I strongly believe that in automated testing scenarios, the ObjectMother just doesn’t fit. Consider the following acceptance criteria:

- Using the sample database

- Open the grid screen

- Click on 2nd row

- There should be an error on the screen

There are so many things wrong with this that it’s hard to count. Let’s forget about lack of detail and focus on the real question: “What value does this test have?”

If you’re focusing on defining system behavior, then you’ve failed. This doesn’t describe any behavior at all. If you’re focusing on removing flaws, I think you’ve still failed. You’ve identified a problem but you’ve failed to capture the state of the system associated with it.

Here’s my point: tests are most useful when they are self-explanatory. I want to pull open a test and have everything that I need right at my fingertips. I don’t want to cross-reference other systems, emails, wiki pages, etc. to figure out what data exists for the test.

A well-defined acceptance test should look like this (it should look familiar):

- Test input (system state)

- Behavior

- Expected results

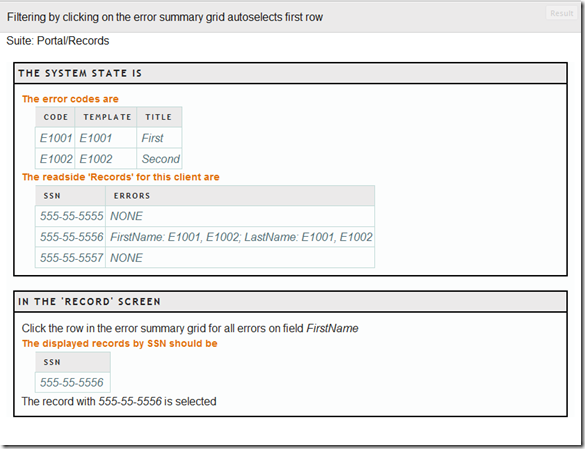
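The same three-part shape can be sketched in plain Python. This is only an illustration: `Grid` is a hypothetical stand-in for the system under test, not anything from my project or from StoryTeller.

```python
# Hypothetical stand-in for the system under test: a grid that reports
# the errors recorded against whichever row is selected.
class Grid:
    def __init__(self, rows):
        self.rows = rows
        self.displayed_errors = []

    def select_row(self, number):
        # Row numbers are 1-based, matching how a person would describe them.
        self.displayed_errors = self.rows[number - 1]["Errors"]


def test_selecting_a_row_with_errors_shows_them():
    # Test input (system state): declared right here, not in a shared database.
    grid = Grid([
        {"First Name": "Olivia", "Last Name": "Arnold", "Errors": []},
        {"First Name": "Josh", "Last Name": "Arnold", "Errors": ["FirstName: E100"]},
    ])

    # Behavior
    grid.select_row(2)

    # Expected results
    assert grid.displayed_errors == ["FirstName: E100"]


test_selecting_a_row_with_errors_shows_them()
```

Compare this with the "sample database" criteria above: here a reader can see why the second row has an error without leaving the test.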

Using StoryTeller for my examples, here’s what a test looks like (a condensed snippet from my current project):

Wrapping it up

Let me reiterate my points here for the sake of clarity. Automated tests that are easy to read, write, and maintain follow these rules:

- Data setup must be declarative (think tabular inputs)

- Data setup must be as easy as possible

- The inputs and dependencies of the test live within the expression (see the ST screenshot above for an example)

Next time we’ll discuss how to standardize your UI mechanics.